This research direction focuses on formal methods and decision-making algorithms for dynamic terrestrial locomotion and aerial manipulation in complex, human-populated environments. We aim to develop scalable planning and decision-making algorithms that enable heterogeneous robot teammates to interact dynamically with unstructured environments and to collaborate with humans.

Existing recovery strategies often struggle to reason about complex task logic and to evaluate locomotion robustness systematically, which leaves them susceptible to failures caused by inappropriate recovery strategies or insufficient robustness. This study introduces a robust planning framework based on model predictive control (MPC) enhanced with signal temporal logic (STL) specifications. It is the first study to apply STL-guided trajectory optimization to bipedal locomotion, and it is designed specifically to handle both translational and orientational perturbations.
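What distinguishes STL from Boolean temporal logics is its quantitative robustness semantics: a real number whose sign says whether a trajectory satisfies a specification and whose magnitude says by how much. The sketch below shows the standard robustness semantics for an atomic predicate and the bounded "always" and "eventually" operators on a discretely sampled signal, plus a log-sum-exp smooth minimum of the kind often used to make robustness differentiable for trajectory optimization. It is a minimal sketch, not the project's formulation: the function names, the "stability margin" signal, and the threshold are hypothetical.

```python
import math
from collections.abc import Sequence


def rob_predicate(signal: Sequence[float], threshold: float) -> list[float]:
    """Robustness of the atomic predicate x_t >= threshold at every step: x_t - threshold."""
    return [x - threshold for x in signal]


def _window(rho: Sequence[float], a: int, b: int, t: int) -> list[float]:
    # Samples covered by the bounded interval [t + a, t + b] (inclusive).
    window = list(rho[t + a : t + b + 1])
    if not window:
        raise ValueError("time window lies outside the signal")
    return window


def rob_always(rho: Sequence[float], a: int, b: int, t: int = 0) -> float:
    """G_[a,b] phi at time t: worst-case (minimum) robustness over the window."""
    return min(_window(rho, a, b, t))


def rob_eventually(rho: Sequence[float], a: int, b: int, t: int = 0) -> float:
    """F_[a,b] phi at time t: best-case (maximum) robustness over the window."""
    return max(_window(rho, a, b, t))


def soft_min(values: Sequence[float], k: float = 50.0) -> float:
    """Smooth lower bound on min(values) via log-sum-exp; larger k means a tighter bound."""
    m = min(values)  # shift by the minimum for numerical stability
    return m - math.log(sum(math.exp(-k * (v - m)) for v in values)) / k


# Hypothetical stability margins (in meters) over five MPC steps,
# checked against the specification "always margin >= 0.05 on [0, 4]".
margin = [0.12, 0.09, 0.07, 0.10, 0.11]
rho = rob_predicate(margin, 0.05)
print(rob_always(rho, 0, 4))  # approximately 0.02: satisfied, with 2 cm of slack
```

A trajectory optimizer could enforce such a value as a constraint (robustness ≥ 0) or reward larger margins through the smooth bound; which option the framework above uses is not stated in this summary.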

Trajectory optimization through contact is a powerful family of algorithms for designing dynamic robot motions that involve physical interaction with the environment. However, the trajectories these algorithms produce can be difficult to execute in practice because of several common sources of uncertainty: robot model errors, external disturbances, and imperfect estimates of surface geometry and friction.

Humanoid robots are designed to perform complex loco-manipulation tasks in human-centered environments. This research introduces Opt2Skill, a pipeline that integrates model-based trajectory optimization with reinforcement learning (RL) to enable versatile whole-body humanoid robot loco-manipulation.

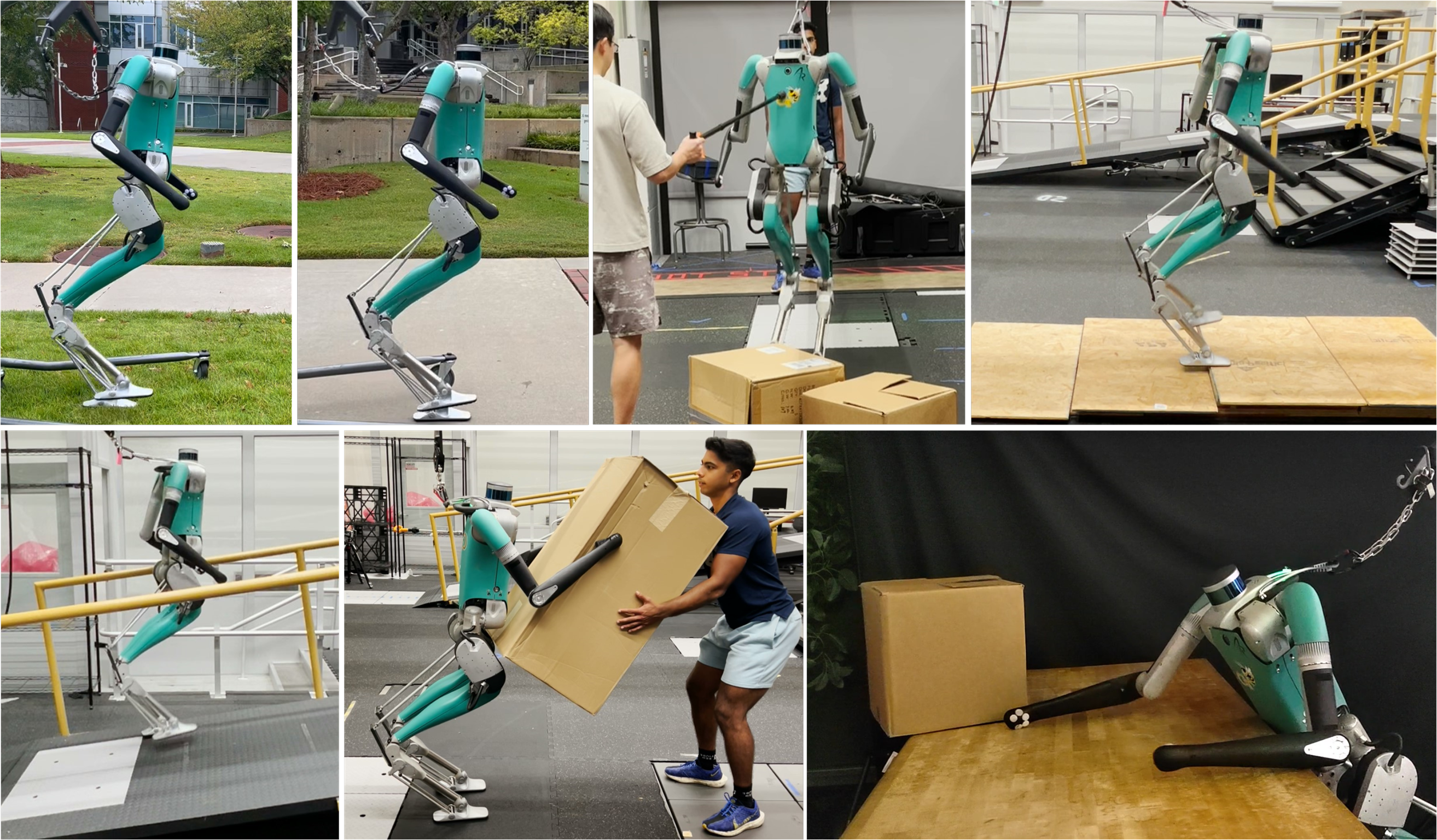

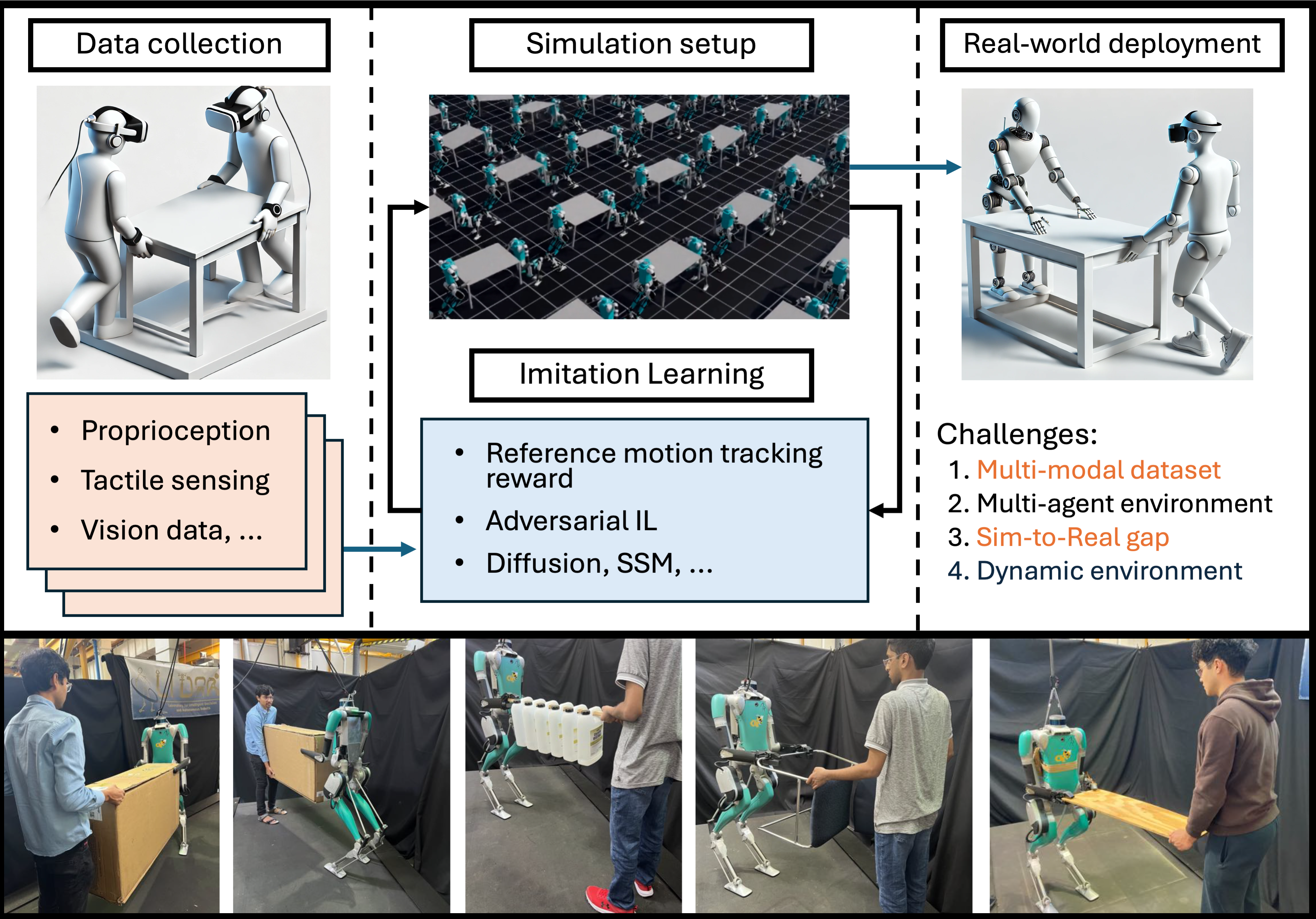

The goal of this research is to develop a whole-body control method that lets humanoid robots perform collaborative transportation tasks with humans. Our approach combines imitation learning with social skill learning so that robots can coordinate effectively with their human partners. We also propose a simulated environment for testing the approach and are developing a Sim2Real framework for transferring it to a real-world collaborative task.

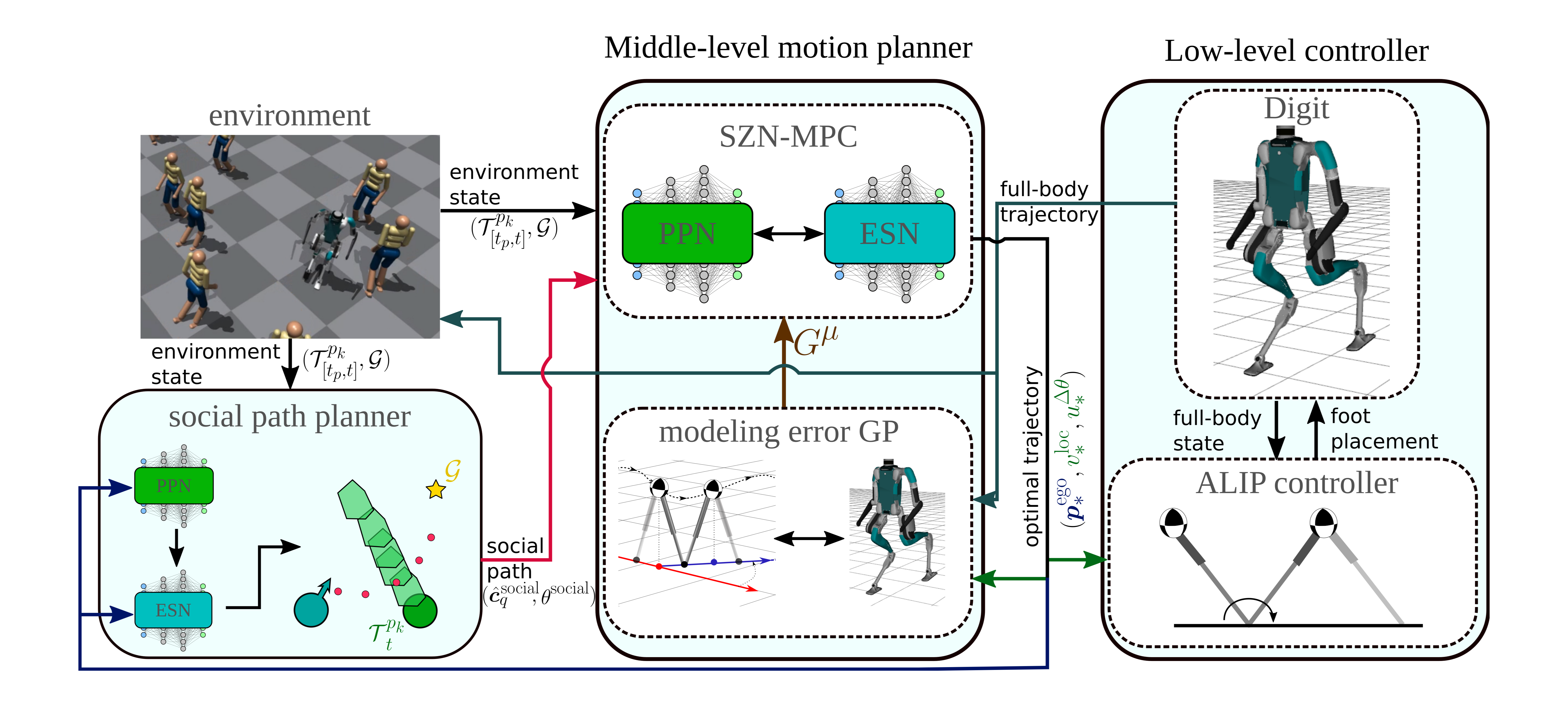

Navigating dynamic, human-crowded environments is challenging for bipedal robots due to uncertain pedestrian dynamics and social behavior considerations. This research introduces a novel framework that integrates prediction and motion planning using the Social Zonotope Network (SZN), enabling socially acceptable and collision-free bipedal robot navigation.
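Assuming, as its name suggests, that SZN expresses its predictions as zonotopes, it helps to recall why that set representation suits planning: a zonotope Z = {c + Σⱼ ξⱼ gⱼ : ξⱼ ∈ [−1, 1]} is closed under Minkowski sums and linear maps, and both operations are cheap. The minimal sketch below shows only that generic zonotope arithmetic together with a conservative collision test based on interval hulls. It does not reproduce the SZN network or its planner, and the class name, helper names, and numbers are hypothetical.

```python
from dataclasses import dataclass, field

Vector = list[float]


@dataclass
class Zonotope:
    """Z = {center + sum_j xi_j * g_j : xi_j in [-1, 1]}."""
    center: Vector
    generators: list[Vector] = field(default_factory=list)

    def minkowski_sum(self, other: "Zonotope") -> "Zonotope":
        # Centers add; generator lists simply concatenate.
        c = [a + b for a, b in zip(self.center, other.center)]
        return Zonotope(c, self.generators + other.generators)

    def linear_map(self, A: list[Vector]) -> "Zonotope":
        # Image under x -> A x: map the center and every generator.
        def mv(v: Vector) -> Vector:
            return [sum(row[j] * v[j] for j in range(len(v))) for row in A]
        return Zonotope(mv(self.center), [mv(g) for g in self.generators])

    def interval_hull(self) -> tuple[Vector, Vector]:
        # Tightest axis-aligned box: radius_i = sum_j |g_j[i]|.
        n = len(self.center)
        radius = [sum(abs(g[i]) for g in self.generators) for i in range(n)]
        lo = [c - r for c, r in zip(self.center, radius)]
        hi = [c + r for c, r in zip(self.center, radius)]
        return lo, hi


def hulls_disjoint(z1: Zonotope, z2: Zonotope) -> bool:
    """Conservative test: True certifies no overlap; False means 'possibly colliding'."""
    lo1, hi1 = z1.interval_hull()
    lo2, hi2 = z2.interval_hull()
    return any(h1 < l2 or h2 < l1 for l1, h1, l2, h2 in zip(lo1, hi1, lo2, hi2))


# Hypothetical pedestrian position set, grown by an uncertain one-step displacement.
pedestrian = Zonotope([2.0, 0.0], [[0.3, 0.0], [0.0, 0.3]])
step = Zonotope([0.5, 0.0], [[0.2, 0.1]])
predicted = pedestrian.minkowski_sum(step)
robot = Zonotope([0.0, 0.0], [[0.25, 0.0], [0.0, 0.25]])
print(hulls_disjoint(robot, predicted))  # True: the boxes are separated along x
```

Because the interval-hull test only over-approximates each set, a False result does not prove a collision; an exact zonotope intersection check (for example, via a linear program) would be needed to decide that case.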

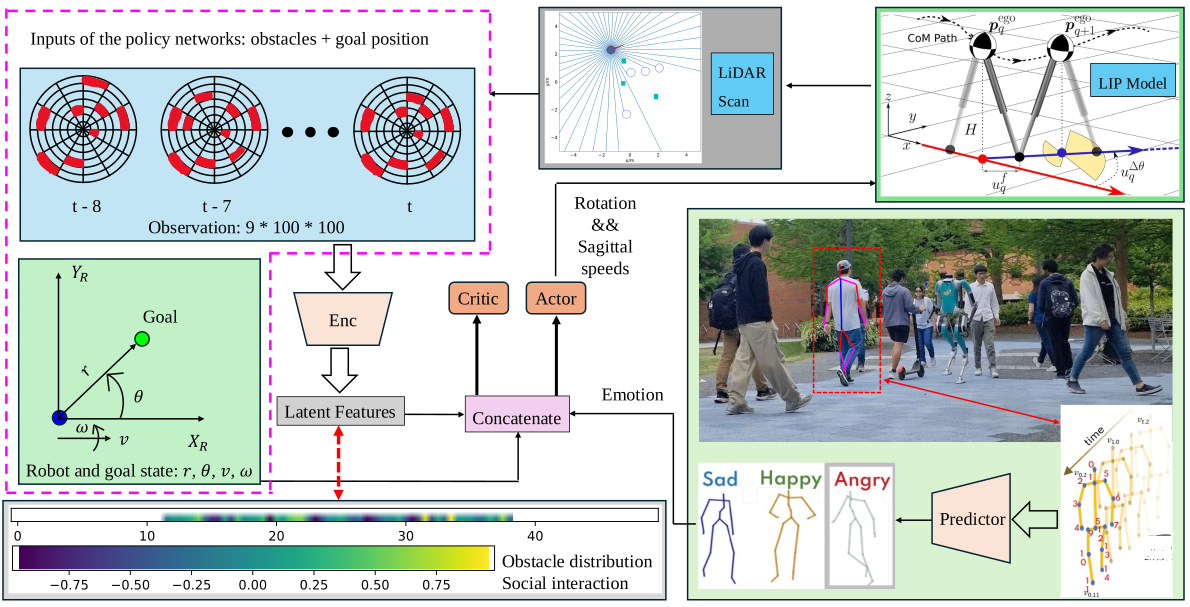

This study presents a deep reinforcement learning (DRL) based framework for emotion-aware and terrain-aware humanoid robot navigation in socially interactive environments. We represent dynamic environments with sequential LiDAR grid maps, from which we extract latent features, including implicit collision areas and complex social interactions.

Search and rescue missions require robotic systems capable of navigating challenging environments with complex terrain. This research introduces a framework that integrates terrain-aware model predictive control (MPC) to coordinate a heterogeneous team of bipedal and aerial robots. The framework focuses on terrain-adaptive path planning, multi-robot task allocation, and real-time rescue operations.

This project aims to develop a reinforcement learning-augmented model predictive control (RL-augmented MPC) framework designed for bipedal locomotion on challenging deformable terrain, such as gravel and sand.

Enabling bipedal robots to learn to walk over highly uneven, dynamically changing terrain is challenging because of the complexity of the robot dynamics and of the environments they interact with. Recent advances in learning from demonstrations have shown promising results for robot learning in complex environments. While imitation learning of expert policies has been well explored, learning expert reward functions remains largely unexplored in legged locomotion. This paper applies state-of-the-art Inverse Reinforcement Learning (IRL) techniques to bipedal locomotion over complex terrain. We propose algorithms for learning expert reward functions and then analyze the learned functions. Through nonlinear function approximation, we uncover meaningful insights into the expert's locomotion strategies. Furthermore, we empirically demonstrate that training a bipedal locomotion policy with the inferred reward functions improves its walking performance on unseen terrain, highlighting the adaptability that reward learning offers.

This research focuses on developing motion planners and navigation frameworks that allow quadrupeds to be deployed autonomously in unknown environments. We develop semantic environment representations tailored to legged morphologies that help determine when and where to step, fast egocentric motion planners that can handle dynamic environments, and robust whole-body controllers that allow these robots to track desired trajectories while reacting to disturbances.

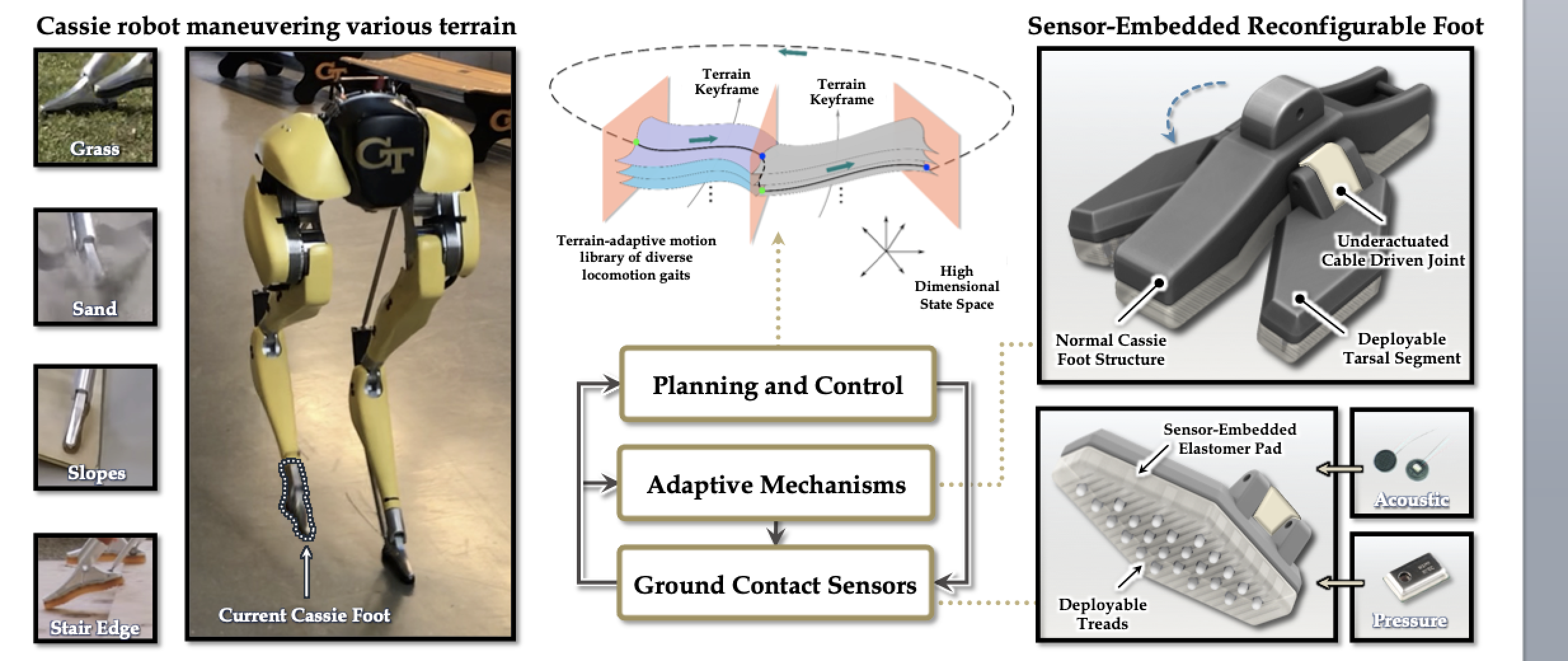

Locomotion in cluttered outdoor environments requires the contacting foot to be aware of terrain geometry, stiffness, and granular media properties. Although current internal and external mechanical and visual sensors have enabled high-performance state estimation for legged locomotion, rich ground-contact sensing remains a bottleneck to improved control in austere conditions.

Bipedal locomotion on diverse terrain is a complex challenge. Unlike controlled lab surfaces, which are flat, rigid, and predictable, natural terrain such as sand, gravel, boulders, and grass can be slippery and deformable and can cause robots to sink. These characteristics demand significant advances in robot design and control to achieve robust locomotion. This project aims to develop an advanced robotic foot equipped with actuators and sensors, together with upgraded control algorithms, to enable robots to walk reliably on varied terrain.

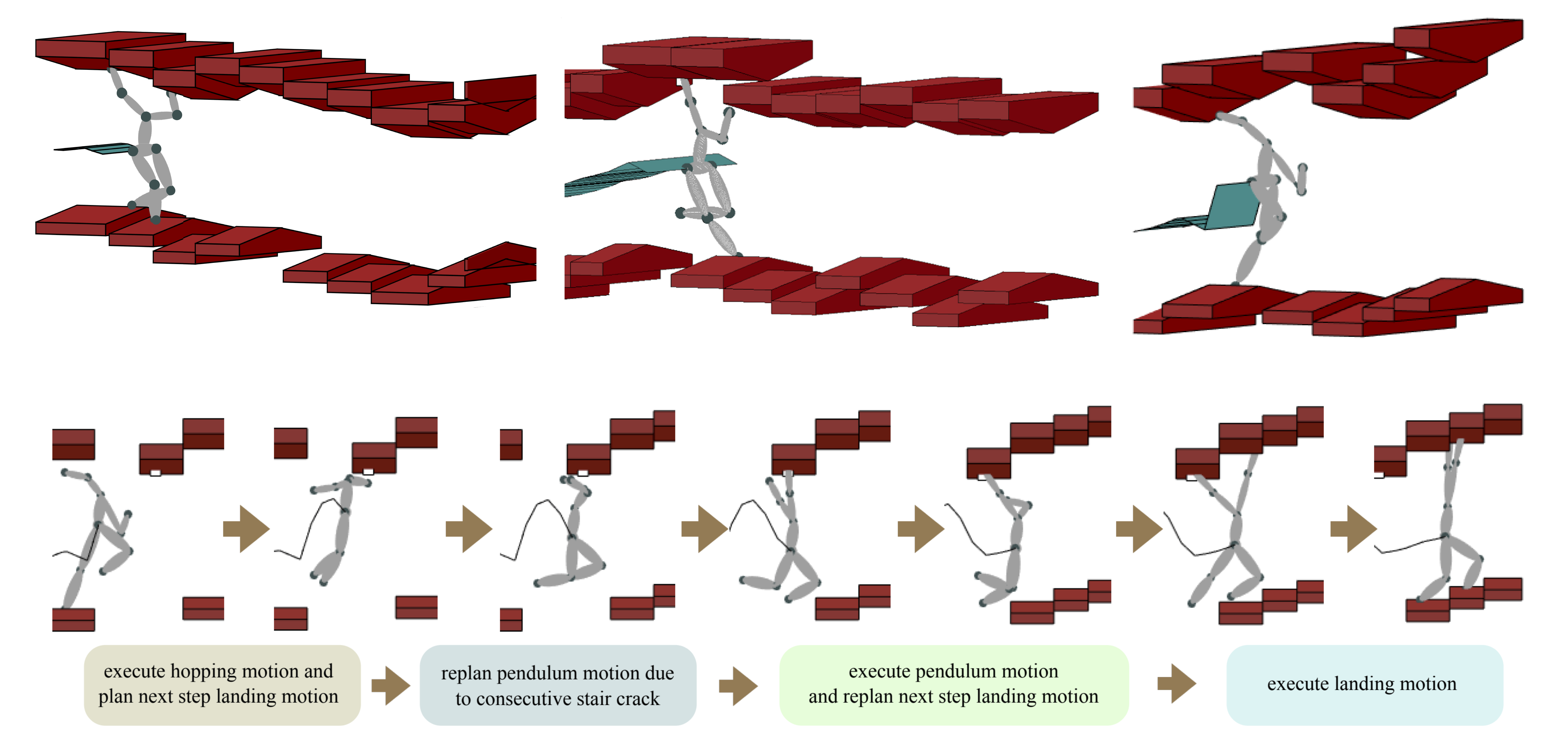

This project takes a step toward formally synthesizing high-level reactive planners for unified legged and armed locomotion in constrained environments. We formulate a two-player temporal logic game between the contact planner and its possibly adversarial environment.
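One standard way to solve such games on a finite abstraction is a fixed-point (attractor) computation. The minimal sketch below assumes, which the summary above does not state, that the temporal-logic objective has already been reduced to a reachability objective on a finite, turn-based game graph. It computes the set of states from which the contact planner can force a visit to a goal no matter how the environment responds, and it extracts a memoryless winning strategy. The function name, state names, and edges are hypothetical.

```python
def solve_reachability_game(planner_states: set[str], env_states: set[str],
                            edges: dict[str, list[str]], target: set[str]):
    """Return (winning_states, strategy) for the planner in a turn-based reachability game."""
    rank = {s: 0 for s in target}  # rank = number of moves needed to force the target
    layer = 0
    while True:
        layer += 1
        new_states = []
        for s in planner_states | env_states:
            if s in rank:
                continue
            succ = edges.get(s, [])
            if s in planner_states:
                # The planner needs just one move into the current winning set.
                ok = any(t in rank for t in succ)
            else:
                # Every environment move must stay winning (dead ends count as losing here).
                ok = len(succ) > 0 and all(t in rank for t in succ)
            if ok:
                new_states.append(s)
        if not new_states:
            break
        for s in new_states:
            rank[s] = layer
    # Memoryless strategy: from each winning planner state, move to a lower-ranked state.
    strategy = {
        s: min((t for t in edges[s] if t in rank), key=lambda t: rank[t])
        for s, r in rank.items()
        if s in planner_states and r > 0
    }
    return set(rank), strategy


# Hypothetical abstraction: stepping freely may let the environment cause a slip,
# whereas bracing with the arm first guarantees progress to the goal.
planner = {"stance", "hand_contact", "slip", "goal"}
env = {"after_free_step", "after_brace"}
edges = {
    "stance": ["after_free_step", "after_brace"],
    "after_free_step": ["goal", "slip"],
    "after_brace": ["hand_contact"],
    "hand_contact": ["goal"],
    "slip": ["slip"],
    "goal": ["goal"],
}
win, strategy = solve_reachability_game(planner, env, edges, {"goal"})
print(strategy)  # {'hand_contact': 'goal', 'stance': 'after_brace'} (key order may vary)
```

Memoryless strategies suffice for reachability games, which is why a simple state-to-successor map is enough here; richer temporal-logic objectives generally require product constructions or nested fixed points instead.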